HIPAA, GDPR, and Voice Dictation: A Compliance Guide

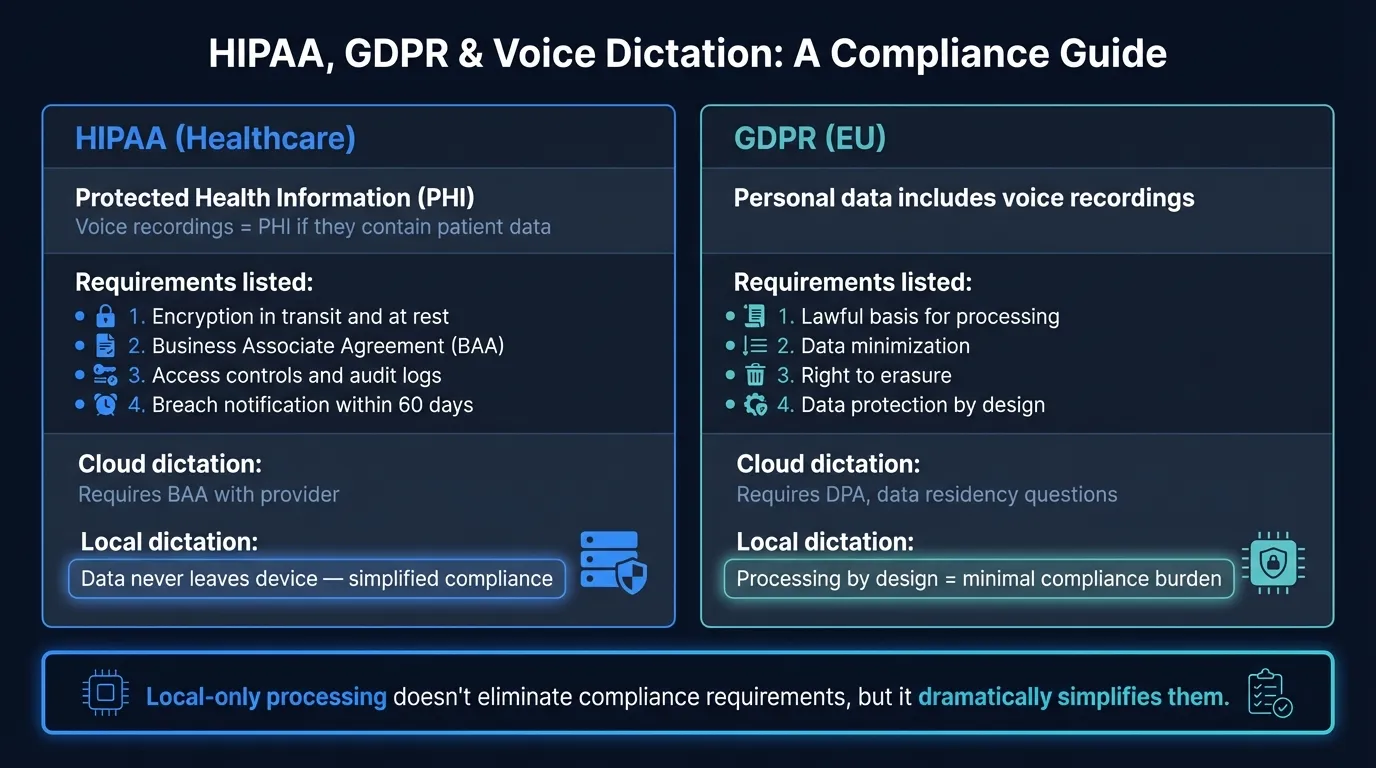

Using cloud dictation with patient or client data may violate HIPAA and GDPR. Here's what the regulations require — and how local processing changes the picture.

In 2016, a New Jersey transcription company called Best Medical Transcription uploaded dictated physician notes to an FTP server with no authentication required. Patient records — names, diagnoses, treatment details — sat on a publicly accessible server for months. When the breach was discovered, the vendor paid $200,000 in civil penalties and was barred from operating. Virtua Medical Group, the covered entity that hired them, paid an additional $417,816. Both parties faced penalties because both failed their HIPAA obligations.

This case is a useful illustration of how the cloud dictation compliance problem actually works in practice: the risk isn't only about what the transcription service does with audio. It's about whether the legal framework is in place before audio ever gets transmitted.

HIPAA-compliant dictation means voice data containing Protected Health Information is handled by a vendor that has signed a Business Associate Agreement, implemented required technical safeguards, and agreed not to use PHI for purposes beyond the contracted service. Most consumer-grade dictation apps don't meet this standard. Many professionals in healthcare, legal, and financial services are using non-compliant tools today without realizing it.

The compliance comparison table later in this guide shows how enterprise cloud, consumer cloud, and local dictation compare on key compliance requirements.

When voice dictation triggers HIPAA

HIPAA's Privacy Rule applies to Protected Health Information: individually identifiable health information maintained or transmitted by a covered entity or its business associates, as defined in 45 CFR §160.103. Voice data is named explicitly in the rule's de-identification standard: 45 CFR §164.514(b)(2) lists "biometric identifiers, including finger and voice prints" among the 18 identifiers that must be removed before data counts as de-identified. Audio recordings that contain health information about an identifiable person are PHI.

When that audio is digitized — transmitted, stored, or processed by software — it becomes electronic PHI (ePHI), which must also satisfy the HIPAA Security Rule's technical safeguards under 45 CFR §164.312.

In practice, any dictated clinical note, physician letter, therapy session recording, or insurance documentation that names or identifies a patient is PHI. A doctor dictating a progress note. A nurse documenting a care plan. A medical biller describing a claim. All PHI.

Who is a covered entity: healthcare providers that transmit health information electronically, health plans, and healthcare clearinghouses. Their business associates — anyone who creates, receives, maintains, or transmits PHI on their behalf — are bound by HIPAA even if they're not healthcare organizations themselves. A transcription vendor is a business associate. So is the company whose servers process your dictation.

The BAA requirement

Before any cloud vendor can touch PHI, the covered entity must have a signed Business Associate Agreement in place (45 CFR §164.308(b) and §164.504(e)). A BAA is not just a checkbox. It's a contract that:

- Specifies permitted uses and disclosures of PHI

- Prohibits the vendor from using PHI beyond the contracted service

- Requires the vendor to implement HIPAA Security Rule safeguards

- Requires the vendor to report breaches to the covered entity without unreasonable delay, and no later than 60 days after discovery

- Requires the vendor to ensure its own subcontractors sign BAAs

No BAA, no compliant cloud dictation — full stop. This is true regardless of the app's security features. A cloud speech-to-text service can encrypt audio in transit and at rest, delete recordings immediately after transcription, and pass every technical audit. Without a BAA, a covered entity cannot legally route PHI through it.

Dragon Medical One (Nuance/Microsoft) is the dominant HIPAA-compliant clinical dictation platform. It includes a Business Associate Addendum as part of its EULA and states that original voice recordings are not retained after transcription — only the transcribed text and custom vocabulary profiles are stored. The trade-off is cost: Dragon Medical One runs $79–$99 per user per month depending on contract length, plus an implementation fee of around $525.

Some general-purpose dictation apps also offer BAAs — typically on enterprise plans at additional cost. What most do not offer: a BAA on their standard consumer subscription. Wispr Flow, for instance, does not publish a BAA for standard accounts. Google Workspace Speech-to-Text offers a BAA as part of Google Workspace Business and Enterprise tiers. Consumer Google products do not.

If you're in healthcare and using a standard-tier cloud dictation app to document patient care, check whether the vendor has signed a BAA with your organization. If it hasn't, you can't lawfully route PHI through the app.

What cloud tools do with medical audio

Even when a BAA is in place, the technical details matter.

A standard cloud dictation workflow sends audio from your device to the vendor's servers, where a speech recognition model runs inference and returns a transcription. The audio file exists on the vendor's infrastructure during this process. What happens next depends on the vendor's retention policy.

Most enterprise medical dictation vendors (Dragon Medical One, M*Modal, DeepScribe) state they delete audio after transcription and do not use it for model training. Their contracts include language prohibiting secondary use of PHI. But the audio still transits their network. If their infrastructure is compromised during that window, or if a subprocessor they use has weaker controls, the exposure exists.

Consumer-grade apps have weaker protections. Wispr Flow's standard privacy policy states that without enabling Privacy Mode, dictation data "may be used to evaluate, train and improve Flow's features and AI models." Apple's Siri sends audio to Apple servers and retains it; the 2019 whistleblower report revealed contractors reviewing recordings. These apps are not appropriate for PHI.

The compliance burden for cloud dictation is ongoing: vendor audits, BAA renewals when contracts change, tracking subprocessors, monitoring data retention practices. Technical safeguard requirements under 45 CFR §164.312 are currently being updated — HHS proposed eliminating the "addressable vs. required" distinction in January 2025, which would make encryption of ePHI in transit and at rest fully mandatory rather than "addressable." The final rule is expected in mid-2026.

How GDPR applies to voice data

For organizations operating in Europe or processing data from EU residents, GDPR creates a parallel set of obligations.

Voice recordings are personal data under GDPR Article 4(1). A voice is identifiable — accent, cadence, pitch, and vocal characteristics can confirm who a person is. Any organization processing voice recordings of EU data subjects needs a lawful basis under Article 6.

The more demanding analysis applies when voice data is processed specifically to identify a speaker. GDPR Article 4(14) defines biometric data as "personal data resulting from specific technical processing relating to the physical, physiological or behavioural characteristics of a natural person, which allow or confirm the unique identification" of that person. Article 9(1) treats biometric data processed for the purpose of uniquely identifying someone as special category data, and prohibits processing it unless an Article 9(2) exception applies. If a dictation app extracts voice fingerprints or runs speaker identification, Article 9 applies on that ground. For straightforward speech-to-text that isn't using audio to identify speakers, the general Article 6 lawfulness analysis governs the voice data itself, although dictated content that describes a person's health is special category data under Article 9(1) in its own right.

What processors must do

A cloud transcription service receiving EU data is a data processor under GDPR Article 28. The contract between your organization and the transcription vendor must be a Data Processing Agreement (DPA) that requires the vendor to:

- Process data only on documented instructions

- Implement appropriate technical and organizational security measures

- Obtain permission before engaging sub-processors

- Assist with data subject rights requests (access, erasure, portability)

- Delete or return all data at the end of the contract

No DPA, no compliant EU data processing. The parallel to HIPAA's BAA requirement is direct.

Data transfers to US servers

This is where EU-based healthcare workers and law firms face a complication that US organizations don't. GDPR Chapter V restricts transfers of personal data to countries without an EU adequacy decision. The United States has conditional adequacy under the EU-US Data Privacy Framework (adopted July 2023), but only for US companies certified under the Framework's principles. If your cloud dictation vendor is certified, SCCs may not be required. If they're not, Standard Contractual Clauses and a Transfer Impact Assessment documenting the US legal environment around government access are required.

The practical implication: before using a US-based cloud dictation app with EU client or patient data, verify whether the vendor is EU-US Data Privacy Framework certified. If they're not, using them may constitute an unlawful data transfer.
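The transfer logic described above can be sketched as a small decision function. This is an illustrative simplification, not legal advice: the function name, its parameters, and the wording of the returned steps are all hypothetical, and it assumes a US-based vendor as in the scenario above.

```python
def transfer_requirements(processed_on_device: bool, dpf_certified: bool) -> list[str]:
    """Return the GDPR Chapter V steps suggested for a US-based dictation vendor.

    Hypothetical helper for illustration only; a real transfer analysis
    needs review by counsel or a data protection officer.
    """
    if processed_on_device:
        # Audio never leaves the device, so no third-country transfer occurs.
        return []
    if dpf_certified:
        # The transfer can rely on the EU-US Data Privacy Framework adequacy decision.
        return ["Confirm the vendor's active listing on the DPF participant list"]
    # No adequacy basis applies: fall back to contractual safeguards.
    return [
        "Sign Standard Contractual Clauses",
        "Complete a Transfer Impact Assessment",
    ]
```

Calling `transfer_requirements(False, False)` returns the two contractual steps, while an on-device tool returns an empty list because there is no transfer to assess.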

Documented enforcement: In May 2022, the Polish Data Protection Authority fined the Warsaw Centre for Intoxicated Persons for audio recording without a valid legal basis — a violation of GDPR Articles 5(1)(a) and 6(1). The fine was 10,000 PLN (approximately EUR 2,200). Small, but the principle is established: audio recording without lawful basis is an active enforcement area across EU member states.

What local processing changes

When a dictation app processes audio entirely on-device, the technical architecture of the compliance problem changes.

HIPAA: If audio never reaches a third-party server, there is no business associate relationship for the transcription service itself. The vendor who makes the app has no access to PHI. There is no transmission to encrypt, no server-side retention to audit, no BAA to negotiate. The covered entity still has obligations — device security, access controls, proper handling of transcribed text — but the cloud dictation compliance surface disappears.

This is not a policy claim. It's verifiable with a network monitor. Run Little Snitch while using an on-device dictation app during transcription. Zero outbound connections means zero audio transmission. The PHI exists only on the device of the person who dictated it.
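For readers without a dedicated network monitor, a rough version of the same check can be scripted. The sketch below snapshots a process's internet sockets with `lsof` (available on macOS and Linux) and lists any remote endpoint that is not loopback. The function names are hypothetical, and a point-in-time snapshot can miss short-lived connections or helper processes, so treat it as a quick sanity check and not a substitute for continuous monitoring.

```python
import subprocess

# Remote addresses that stay on the local machine.
LOOPBACK_PREFIXES = ("127.", "[::1]", "localhost")

def remote_endpoints(lsof_output: str) -> list[str]:
    """Extract non-loopback remote endpoints from `lsof -nP -i` style output."""
    remotes = []
    for line in lsof_output.splitlines():
        for token in line.split():
            if "->" not in token:
                continue  # header fields and listening sockets have no remote side
            remote = token.split("->", 1)[1]
            if not remote.startswith(LOOPBACK_PREFIXES):
                remotes.append(remote)
    return remotes

def check_process(pid: int) -> list[str]:
    """Snapshot one process's internet sockets and return its remote endpoints."""
    result = subprocess.run(
        ["lsof", "-nP", "-a", "-i", "-p", str(pid)],
        capture_output=True, text=True, check=False,  # lsof exits 1 when nothing matches
    )
    return remote_endpoints(result.stdout)
```

Running `check_process(pid)` repeatedly while dictating, with `pid` set to the dictation app's process ID, should return an empty list each time if the app is genuinely processing audio locally.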

GDPR: No transmission means no third-country data transfer. No sub-processor means no DPA required with the dictation vendor. Voice data processed on-device never enters the EU-US data transfer analysis.

Hearsy uses NVIDIA Parakeet TDT 0.6B v2 for English (under 50ms, 1.69% word error rate on the LibriSpeech clean benchmark — NVIDIA, 2025) or OpenAI Whisper Large V3 for 99 languages (2.7% WER — OpenAI, 2023). Both models run in RAM on Apple Silicon. During transcription, no audio leaves the device.

Compliance comparison

| Factor | Cloud dictation (enterprise) | Cloud dictation (consumer) | Local dictation |
|---|---|---|---|
| BAA available for HIPAA | Yes (typically enterprise tier) | No | Not required (no PHI transmission) |
| DPA available for GDPR | Yes (typically) | Rarely | Not required (no data processed by vendor) |
| Audio transmitted to third party | Yes | Yes | No |
| Audio retained on vendor servers | Varies by policy | Often yes | No (never transmitted) |
| US-EU data transfer compliance needed | Yes | Yes | No |
| Compliance depends on vendor's practices | Yes | Yes | No |
| Works in air-gapped environments | No | No | Yes |

Who needs to act now

Healthcare providers dictating clinical notes, referrals, discharge summaries, or patient communications. If you're using a consumer speech-to-text app for any of this, you need either a BAA-backed cloud solution or a local processing app. The Best Medical Transcription case shows regulators will pursue both covered entities and their vendors.

Law firms handling confidential client communications. Attorney-client privilege extends to the content of your communications, not to the infrastructure they pass through. A cloud dictation service that retains audio or uses it for model training has copies of privileged strategy discussions. Under GDPR, law firms are data controllers with full accountability obligations for how client data is processed.

Financial advisors and investment firms. MiFID II firms are required to record certain client communications and retain them for five years, or up to seven where the competent authority requests it. That requirement supplies a legal basis under GDPR Article 6(1)(c), but it does not remove the obligation to secure those recordings. Under GDPR, firms must inform clients what is recorded, why, and how long it's kept. Using cloud dictation for client notes without a DPA with the vendor is a compliance gap.

Therapists and mental health professionals. Session content is among the most sensitive data that exists. Cloud processing of therapy notes means sensitive health information has been transmitted to a third-party server, regardless of what that server does with it.

Evaluating a dictation tool for compliance

Before using any dictation tool with regulated data, check these:

1. Does the vendor offer a BAA (for HIPAA)? Ask directly. If the answer is "yes, on enterprise plans only," get the pricing. If the answer is no, the tool cannot legally be used with PHI.

2. Does the vendor offer a DPA (for GDPR)? Same question. Most enterprise SaaS companies have standard DPAs on request.

3. Where is audio processed? Ask whether transcription happens on-device or on their servers. If servers, ask where those servers are located.

4. What is the audio retention policy? Ask whether audio is deleted after transcription, and whether it's used for model training. Get this in writing in the BAA or DPA.

5. Can you verify processing location independently? For local apps: airplane mode test. Put the device in airplane mode and try to dictate. If it works, processing is local. For a more thorough test, use a network monitor during transcription to see whether any outbound connections are made.

6. For GDPR transfers: is the vendor EU-US Data Privacy Framework certified? Check the DPF participant list at dataprivacyframework.gov. Certification means the vendor has committed to specific privacy obligations for EU data; it simplifies the transfer analysis significantly.
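The checklist above can also be captured as a small record per tool, which makes it easier to compare vendors side by side. This is a minimal sketch: the `DictationTool` fields and the `compliance_gaps` function are hypothetical names chosen for illustration, the DPF check assumes a US-based vendor, and the output is a prompt for review, not a compliance determination.

```python
from dataclasses import dataclass

@dataclass
class DictationTool:
    """Answers to the checklist questions for one tool (all fields are illustrative)."""
    name: str
    processes_on_device: bool
    baa_signed: bool = False
    dpa_signed: bool = False
    audio_deleted_after_transcription: bool = False
    used_for_model_training: bool = False
    dpf_certified: bool = False

def compliance_gaps(tool: DictationTool, handles_phi: bool, handles_eu_data: bool) -> list[str]:
    """List open checklist items; an empty list means this sketch flagged nothing."""
    if tool.processes_on_device:
        # No audio reaches the vendor, so the vendor-facing questions do not apply.
        return []
    gaps = []
    if handles_phi and not tool.baa_signed:
        gaps.append("No BAA: tool cannot be used with PHI")
    if handles_eu_data and not tool.dpa_signed:
        gaps.append("No DPA with the vendor")
    if handles_eu_data and not tool.dpf_certified:
        # Assumes a US-based vendor, as in the transfer discussion earlier.
        gaps.append("Vendor not DPF certified: SCCs and a Transfer Impact Assessment needed")
    if not tool.audio_deleted_after_transcription:
        gaps.append("Audio retention not limited in writing")
    if tool.used_for_model_training:
        gaps.append("Audio may be used for model training")
    return gaps
```

An empty result for an on-device tool reflects only the vendor-facing questions; device security and access controls for transcribed text remain the organization's responsibility.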

Local processing doesn't eliminate all compliance obligations. You're still responsible for securing your device, managing access to transcribed text, and meeting your organization's data handling policies. But it removes the set of obligations that makes cloud dictation complicated in regulated industries — because those obligations all flow from a single decision: sending audio to someone else's server.

For the technical background on how on-device AI speech recognition works, see the on-device AI speech recognition guide. For the privacy comparison between cloud and local dictation more broadly, see voice data privacy. For offline capability in air-gapped or restricted network environments, see offline dictation software.

Frequently asked questions

Is cloud dictation software HIPAA compliant?

Cloud dictation tools can be HIPAA compliant, but it requires more than good security practices — the vendor must sign a Business Associate Agreement (BAA) before any Protected Health Information can be processed. Most consumer-grade apps (Google Docs Voice Typing, standard Wispr Flow, Siri) do not offer BAAs and cannot be used with patient data. Enterprise medical dictation platforms like Dragon Medical One do include BAAs. Without a signed BAA, using cloud dictation with PHI is a HIPAA violation regardless of the app's encryption or data retention practices.

What happens if you use a non-compliant dictation app with patient data?

HIPAA violations carry civil monetary penalties ranging from $100 to $50,000 per violation depending on culpability, with an annual maximum of $1.9 million per category. In the Best Medical Transcription case (2016), the transcription vendor paid $200,000 in penalties and was dissolved; the covered entity (Virtua Medical Group) paid $417,816. Both parties faced consequences because HIPAA obligations apply to covered entities and their business associates. Violations typically come to regulators' attention through patient complaints, OCR audits, or breach notifications.

Does GDPR apply to voice recordings?

Yes. Voice recordings are personal data under GDPR Article 4(1) because a voice can identify an individual. Any organization that processes voice recordings of EU residents must have a lawful basis under GDPR Article 6. If recordings are processed specifically to identify a speaker (voice biometrics), they become special category data under Article 9, requiring explicit consent or another specific exception. Organizations sending EU voice data to servers outside the EU/EEA also need to comply with GDPR Chapter V transfer requirements — Standard Contractual Clauses or reliance on an adequacy decision like the EU-US Data Privacy Framework.

Does local dictation software simplify HIPAA compliance?

Yes, significantly. When audio is processed entirely on-device, the audio never reaches a third-party server. There is no business associate relationship for the transcription itself, no BAA to negotiate, no server-side retention to audit, and no transmission to encrypt. The compliance obligations that make cloud dictation complex in healthcare all flow from the decision to send audio to an external server. Removing that transmission removes those obligations. Local apps like Hearsy and SuperWhisper process audio in RAM on your Mac. Nothing is transmitted during transcription.

Can I use Hearsy for compliant medical or legal dictation?

Hearsy processes audio entirely on your Mac using local Whisper or Parakeet models. No audio is transmitted to external servers. For HIPAA purposes, Hearsy itself is not a business associate — it doesn't receive, maintain, or transmit PHI on your behalf, because it doesn't receive the audio at all (it runs locally on your device). For GDPR, there is no data transfer to a third country. Local processing does not replace your organization's broader HIPAA Security Rule obligations — you still need to secure the device, control access, and handle transcribed documents appropriately — but it removes the cloud transmission risk that makes most dictation compliance conversations necessary in the first place.
