On-Device AI Speech Recognition: How Apple Silicon Changed the Equation

On-device speech recognition now matches cloud accuracy on Apple Silicon. How the Neural Engine makes local AI transcription fast, private, and offline-capable.

"Local speech recognition can't match cloud accuracy." That was true in 2022. By 2025, on-device processing accounted for 59.47% of the AI speech recognition chip market — a majority of voice AI inference now happens on the device, not in a data center (SNS Insider, January 2026).

The shift happened because two things changed at once: the hardware got dramatically better, and the models got efficient enough to run on it.

On-device speech recognition is a category of AI inference where the speech model runs locally — in RAM on your CPU, GPU, or Neural Engine — rather than on a remote server. Audio is captured, processed by the model, and converted to text without any data leaving your device. The model downloads once; after that, transcription requires no network connection.

This isn't the same as offline storage. The key distinction is where computation happens. Cloud dictation transmits audio to a server that runs the model. On-device dictation runs the model where you are.

Here's how on-device speech recognition stacks up against cloud processing across the dimensions that matter:

The hardware story#

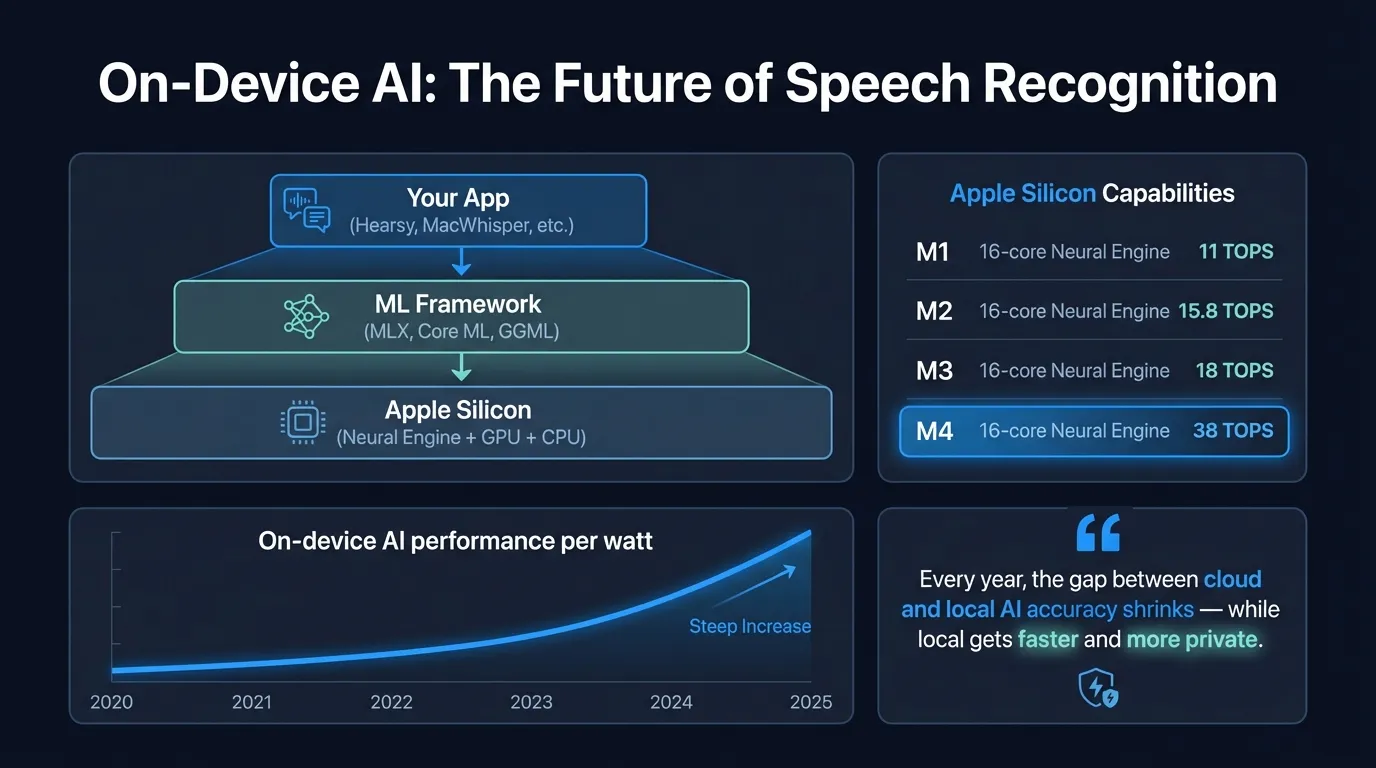

Apple Silicon changed what's possible on a laptop. The Neural Engine — a dedicated ML inference accelerator built into every M-series chip — is the reason.

When Apple released M1 in late 2020, the Neural Engine ran at 11 trillion operations per second (TOPS). The M2 reached 15.8 TOPS. The M4, released in 2024, runs at 38 TOPS (Apple, 2024). The M5, released in late 2025, pushed further with unified memory bandwidth of 153GB/s (Apple, 2025).

This matters for speech because neural network inference is memory-bandwidth-bound at the scales speech models operate. A model like Whisper Large — 1.5 billion parameters — needs to load those parameters from memory during inference. Higher bandwidth means lower latency.

The Neural Engine is also designed for the matrix multiply operations that transformers rely on — the architecture underlying Whisper, Parakeet, and most modern speech models. A CPU runs these operations generically. The Neural Engine runs them natively, with dedicated hardware for the exact computation pattern speech models require.

The result: models that required a server-grade GPU in 2021 now run at real-time speed on a MacBook.

The model story#

Two models power most on-device speech recognition on Mac:

OpenAI Whisper was released in 2022 and trained on 680,000 hours of multilingual audio. The Large V3 variant achieves 2.7% word error rate on the LibriSpeech clean benchmark (OpenAI, 2023). It supports 99 languages and runs at approximately real-time speed on M2 and later chips using Metal acceleration. Whisper's strengths are language coverage and handling of accented speech and difficult audio conditions.

NVIDIA Parakeet TDT 0.6B v2 is English-only and optimized for speed. On LibriSpeech clean, it achieves 1.69% WER (NVIDIA, 2025) — lower error rate than Whisper on English, at significantly faster inference speed. On Apple Silicon with CoreML optimization, Parakeet processes audio in under 50ms. A CoreML-converted version from FluidInference brings this performance natively to Apple platforms without requiring CUDA.

Both are open models. The weights are downloadable, inspectable, and run on consumer hardware without any server component.

How on-device AI transcription works#

When you press a hotkey in a local dictation app, the sequence is:

- The microphone captures audio and buffers it in RAM

- When you stop speaking, the audio buffer is passed to the speech model

- The model — loaded into RAM from disk on first use — runs inference on the audio

- The model outputs a text transcription

- The audio buffer is cleared

No audio travels over a network at any point. Computation happens entirely on the local processor — CPU, GPU, or Neural Engine, depending on what the app uses and what's available.

For Parakeet on Apple Silicon via CoreML, inference completes in under 50ms — text appears before you consciously notice any gap. For Whisper Large, inference takes 1-3 seconds for a typical sentence, depending on chip generation and model variant.

The model itself is a file on disk. Parakeet weighs around 600MB. Whisper Large V3 is around 3GB. Once downloaded, the app is entirely self-contained.

The broader shift#

The migration from cloud to edge inference isn't unique to speech. It's happening across AI categories — image recognition, natural language processing, sensor data — because the same forces apply: faster hardware, more efficient models, reduced model sizes via quantization, and growing concerns about data transmission costs and regulatory exposure.

For voice specifically, AMD's CTO has predicted that the majority of AI inference will move outside the cloud by 2030. The 2025 data already shows on-device processing at 59.47% of the speech recognition chip market — a majority position that's been growing, not emerging.

Several forces are driving this:

Latency. Cloud transcription requires a round-trip to a server. On a good connection, that's 100-300ms of added delay. On-device inference on Apple Silicon is under 50ms for Parakeet. For real-time dictation, latency is the experience — the difference between text appearing as you speak versus a noticeable pause.

Regulatory pressure. Financial and healthcare regulations in the EU and US impose requirements on where data can be transmitted and stored. Audio containing patient information, legal strategy, or financial details is subject to HIPAA, GDPR, and sector-specific rules. Local processing simplifies compliance substantially: data that never leaves the device doesn't trigger most transmission and storage requirements.

Privacy expectations. The 2019 revelations that Apple, Google, and Amazon employed contractors to review voice assistant recordings — without most users' knowledge — shifted what sophisticated users accept as a default. For sensitive workloads, cloud audio transmission has moved from a default to a risk to evaluate.

Reliability. Cloud services have outages. When a provider's infrastructure fails, every app depending on it stops working simultaneously. On-device reliability depends only on your local hardware.

Your Voice, Your Mac, Your Data

Hearsy processes everything on-device. Your voice never leaves your Mac — not even for a millisecond.

What Apple's own investment signals#

Apple's platform moves reflect where they believe the market is going.

In 2025, Apple introduced SpeechAnalyzer — a new on-device speech recognition framework replacing older APIs — alongside a new proprietary Apple model. According to Argmax (the company that built WhisperKit, an optimized Whisper implementation for Apple platforms), Apple's new model matches mid-tier Whisper models on long-form conversational transcription.

This is notable because Apple isn't competing with OpenAI or NVIDIA on model benchmarks — they're building on-device speech capability into the OS itself. The direction is clear: Apple expects on-device speech recognition to be the default, and is investing in infrastructure to support that at the platform level.

The Neural Engine's trajectory reinforces this. It's not a software feature Apple can add later — it's dedicated silicon, which requires architectural commitment years in advance. Building 38 TOPS of ML inference into a consumer laptop chip is a statement about where Apple expects compute to happen.

The honest trade-offs#

On-device speech recognition is competitive, but not uniformly better than cloud.

Where local leads: Speed (under 50ms for Parakeet), privacy (audio stays on-device), offline capability (works on planes, restricted networks), reliability (no server dependency), and regulatory compliance simplification.

Where cloud still leads: Heavily accented speech, high background noise, and specialized domain vocabulary (medical, legal terminology). Cloud services process vastly more diverse training data than any public model, and continuously update. The training data advantage is real on difficult audio.

Language coverage: Whisper's 99 languages sounds comprehensive — and it is for mainstream languages. For less-common languages, cloud services maintain larger fine-tuned models with more training data. On-device accuracy for uncommon languages can trail cloud by a meaningful margin.

For English dictation — clear speech in standard conditions — local models match cloud on every dimension that matters for daily use.

| Factor | On-Device | Cloud |

|---|---|---|

| Latency (English) | Under 50ms (Parakeet) | 100-300ms |

| Accuracy (clean speech) | 1.69% WER (Parakeet) | Comparable |

| Accuracy (difficult audio) | Lower | Higher |

| Privacy | Audio stays on-device | Audio transmitted |

| Offline capability | Yes | No |

| Language coverage | 99 (Whisper) | Broader |

| Reliability | Local hardware only | Server dependency |

| Network required | No | Yes |

What this looks like in practice#

On a current Mac with Apple Silicon, on-device dictation works like this: press a hotkey, speak, text appears at the cursor in whatever app is active. For Parakeet, the transcription completes during the phrase — by the time you finish a sentence, the text is already inserted. No waiting. No connection. No audio leaves the machine.

Apps like Hearsy use Parakeet for English (under 50ms, 1.69% WER) or Whisper for multilingual dictation — both running entirely on-device. Optionally, the app can apply AI cleanup as a post-processing step, which can also run locally via models like Qwen or through a cloud API if preferred. The base transcription path is entirely self-contained.

Setting up local dictation on a Mac takes about ten minutes: download the app, complete onboarding (which includes model downloads — Parakeet is around 600MB, Whisper Large is around 3GB), grant microphone and accessibility permissions, set a hotkey. From that point on, the app works in airplane mode and leaves no audio on external servers.

The shift from cloud to on-device for speech recognition isn't a future state. The hardware that makes it practical — M-series Neural Engine — has been in MacBooks since 2020. The models that match cloud accuracy — Whisper, Parakeet — have been available since 2022 and 2025 respectively. The 59.47% market share figure shows the transition is already well underway.

The cloud was never the destination. It was a workaround while the hardware caught up. On Apple Silicon, it has.

For the privacy implications of on-device vs. cloud processing, see voice data privacy. For offline capability specifically, see offline dictation software. For compliance considerations in healthcare and legal contexts, see the HIPAA and GDPR voice dictation guide. For a broader comparison of local vs. cloud transcription approaches, see AI transcription: local vs. cloud.

Frequently asked questions#

What is on-device speech recognition?#

On-device speech recognition runs the speech AI model locally — in RAM on your CPU, GPU, or Neural Engine — rather than on a remote server. Audio is captured by your microphone, processed by the model running on your device, and converted to text without any data leaving your machine. Apps like Hearsy, SuperWhisper, and MacWhisper on Mac use this approach with Whisper or Parakeet models. The model is downloaded once; transcription requires no internet connection.

Is on-device speech recognition as accurate as cloud?#

For standard dictation — clear speech in a moderately quiet environment — yes. NVIDIA Parakeet TDT 0.6B v2 achieves 1.69% word error rate on the LibriSpeech clean benchmark (NVIDIA, 2025). OpenAI Whisper Large V3 achieves 2.7% WER on the same benchmark (OpenAI, 2023). Cloud services retain an accuracy advantage specifically on difficult audio: heavily accented speech, high background noise, and specialized terminology. For typical daily dictation, the gap is not noticeable.

What makes Apple Silicon good for on-device AI?#

Every M-series chip includes a Neural Engine — dedicated ML inference hardware. The M4 Neural Engine runs at 38 trillion operations per second (Apple, 2024). This handles the matrix multiply operations that transformer-based speech models use far more efficiently than a general CPU. Combined with Apple Silicon's unified memory architecture — CPU, GPU, and Neural Engine share the same memory pool — models load faster and run with lower latency than on CPU-only systems.

Which apps use on-device speech recognition on Mac?#

Hearsy, SuperWhisper, and MacWhisper all process speech locally. Hearsy uses Parakeet for real-time English dictation (under 50ms) or Whisper for 99 languages, and supports continuous system-wide dictation via hotkey. SuperWhisper has a similar workflow. MacWhisper is better suited for transcribing recorded audio files rather than live dictation. macOS built-in dictation also uses on-device processing on Apple Silicon Macs, though it stops after around 30 seconds of continuous speech.

What is local AI transcription?#

Local AI transcription converts audio to text using an AI model that runs entirely on your device. No audio is sent to a server. The model — typically Whisper or Parakeet — is downloaded once and stored locally. During transcription, audio is processed in RAM and converted to text without any network request. This makes transcription work offline, keeps voice data private, and eliminates latency from cloud round-trips. You can verify it with a network monitor: zero outbound connections during transcription means local processing.

Ready to Try Voice Dictation?

Hearsy is free to download. No signup, no credit card. Just install and start dictating.

Download Hearsy for MacmacOS 14+ · Apple Silicon · Free tier available